YOLOv10 Released with Gains in Both Performance and Efficiency: ModelScope Community Best Practices

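The YOLO (You Only Look Once) family of object detection frameworks has become the benchmark for real-time object detection because it strikes an effective balance between computational cost and detection performance.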

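Through continuous development, the YOLO series has made notable progress in architecture design, optimization objectives, and data augmentation strategies. However, its reliance on non-maximum suppression (NMS) for post-processing makes end-to-end deployment difficult and adds inference latency, which hurts performance. In addition, some components of the YOLO models are redundantly designed, which limits model capability and leaves considerable room for improvement.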
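To make the NMS post-processing step concrete, here is a minimal sketch of greedy NMS in plain Python. It is an illustration only, not the implementation used by any YOLO release; the box format `(x1, y1, x2, y2)` and the 0.5 IoU threshold are assumptions chosen for the example.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, iou_threshold=0.5):
    """Return indices of the boxes kept by greedy NMS, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Two heavily overlapping boxes and one separate box (made-up values).
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
print(greedy_nms(boxes, scores))  # [0, 2]
```

Every candidate box is compared against the boxes already kept, so this step runs after the network and its cost grows with the number of predictions. That is the extra stage an NMS-free detector avoids.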

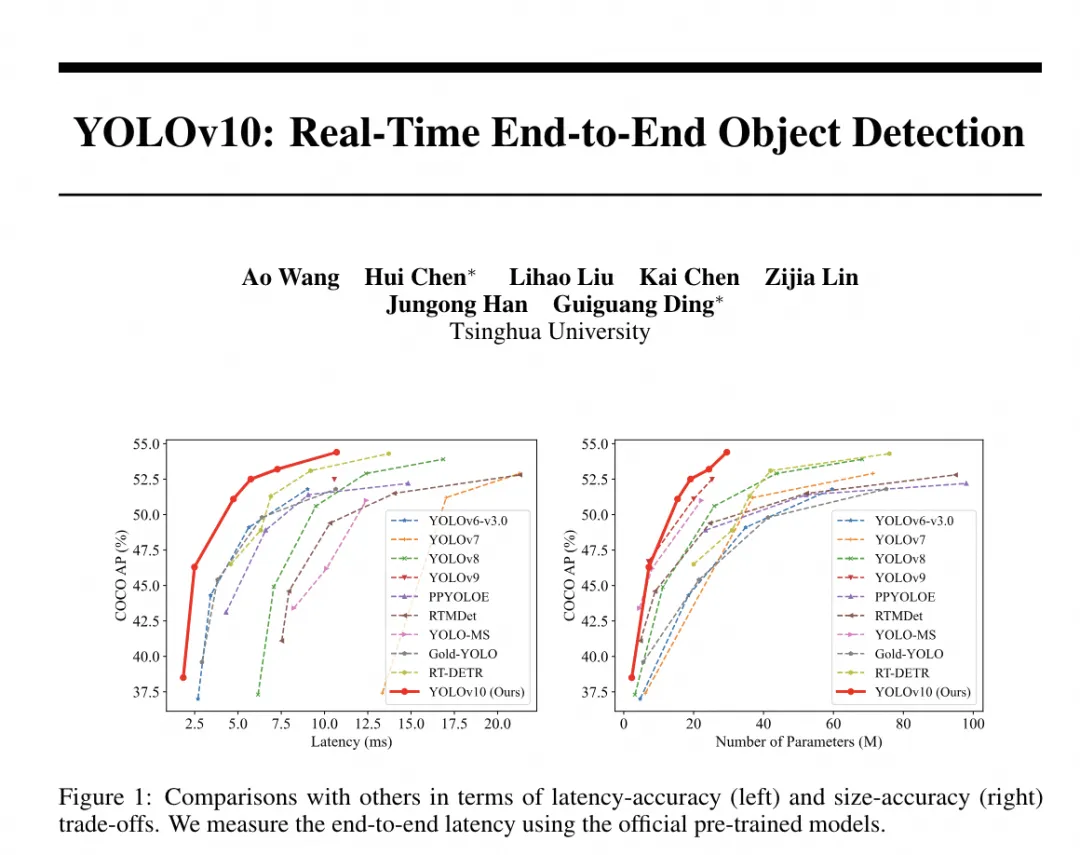

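After YOLOv9 was released in February this year, the YOLO baton passed to the THU-MIG lab at Tsinghua University. In this work, the team aims to push the performance-efficiency boundary of YOLO further from two directions: post-processing and model architecture. The lab first proposes consistent dual assignments for NMS-free training of YOLO, which deliver competitive performance together with low inference latency. The team also introduces a holistic efficiency-accuracy driven model design strategy for YOLO.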
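The idea behind consistent dual assignments can be sketched in a few lines. The sketch assumes the matching metric described in the YOLOv10 paper, roughly `m = s * p**alpha * IoU**beta`, shared by a one-to-many branch (top-k predictions per ground-truth object) and a one-to-one branch (top-1). The function names, exponent values, and `topk` are illustrative assumptions, not the authors' code or configuration.

```python
def match_score(cls_score, iou, alpha=0.5, beta=6.0, prior=1.0):
    """Matching metric m = s * p**alpha * IoU**beta (illustrative exponents)."""
    return prior * (cls_score ** alpha) * (iou ** beta)


def dual_assign(cls_scores, ious, topk=3):
    """Rank predictions for one ground-truth box and pick positives per branch."""
    scores = [match_score(p, u) for p, u in zip(cls_scores, ious)]
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    one_to_many = ranked[:topk]  # rich supervision during training
    one_to_one = ranked[:1]      # single prediction per object, so no NMS is needed
    return one_to_many, one_to_one


# Made-up classification scores and IoUs for four candidate predictions.
cls_scores = [0.9, 0.6, 0.8, 0.3]
ious = [0.7, 0.9, 0.8, 0.95]
one_to_many, one_to_one = dual_assign(cls_scores, ious)
print(one_to_many, one_to_one)
```

Because both branches rank candidates with the same metric, the single positive chosen by the one-to-one branch is also the best sample of the one-to-many branch. This is the sense in which the two assignments are consistent.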

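The team comprehensively optimized the components of YOLO from both the efficiency and the accuracy perspective, greatly reducing computational overhead while improving capability, and released a new generation of YOLO models for real-time end-to-end object detection, named YOLOv10. Extensive experiments show that YOLOv10 achieves state-of-the-art performance and efficiency across model scales. For example, YOLOv10-S is 1.8× faster than RT-DETR-R18 at similar AP on COCO while having 2.8× fewer parameters and FLOPs. Compared with YOLOv9-C, YOLOv10-B has 46% lower latency and 25% fewer parameters at the same performance.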
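The relative figures quoted here mix two conventions: "N× faster/fewer" and "X% lower". The small sketch below converts between them so the numbers can be compared directly; the helper names are made up for this illustration.

```python
def speedup_from_reduction(reduction):
    """Convert a fractional reduction (0.46 means 46% lower) into an 'N x' factor."""
    return 1.0 / (1.0 - reduction)


def reduction_from_factor(factor):
    """Convert an 'N x fewer/faster' factor into a fractional reduction."""
    return 1.0 - 1.0 / factor


print(round(speedup_from_reduction(0.46), 2))  # 1.85
print(round(reduction_from_factor(1.8), 2))    # 0.44
print(round(reduction_from_factor(2.8), 2))    # 0.64
```

Read this way, a 46% latency reduction corresponds to roughly a 1.85× speedup, and "2.8× fewer parameters" means about 64% fewer.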

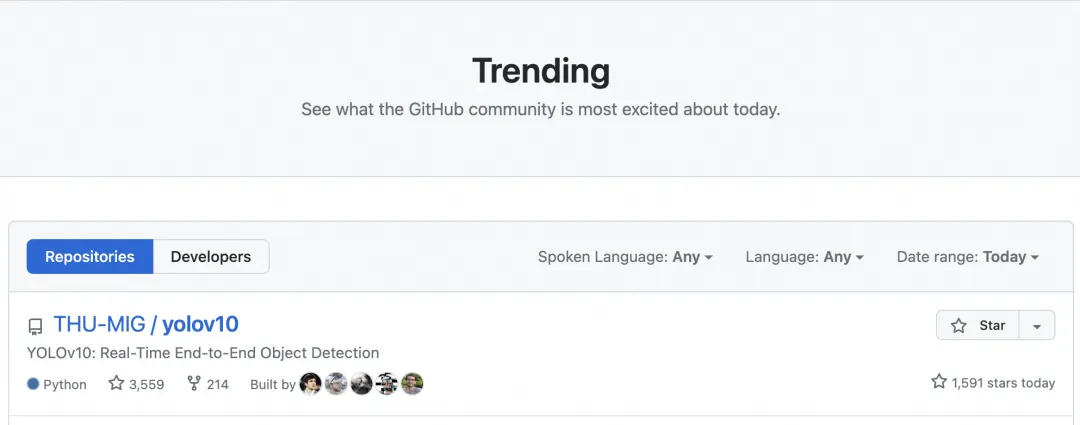

Today, the YOLOv10 project reached the top of GitHub's global Trending list, a sign of recognition from developers around the world.

Paper:

https://arxiv.org/pdf/2405.14458

Project:

https://github.com/THU-MIG/yolov10

Model download

YOLOv10 is now open-sourced on the ModelScope community, and developers are welcome to download and use it.

Model:

https://modelscope.cn/models/THU-MIG/Yolov10

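Model inference

The examples in this article were run on the free GPU compute provided by the ModelScope community.

Inference code:

# Install dependencies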

!pip install supervision git+https://github.com/THU-MIG/yolov10.git

# Download the model

from modelscope import snapshot_download

MODEL_PATH = snapshot_download('THU-MIG/Yolov10')

# Inference code

from ultralytics import YOLOv10

import supervision as sv

import cv2

from IPython.display import Image

# Download the sample image

!wget -P /mnt/workspace/ -q https://modelscope.oss-cn-beijing.aliyuncs.com/resource/image_detection.png

IMAGE_PATH = '/mnt/workspace/image_detection.png'

model = YOLOv10(f'{MODEL_PATH}/yolov10n.pt')

image = cv2.imread(IMAGE_PATH)

results = model(source=image, conf=0.25, verbose=False)[0]

detections = sv.Detections.from_ultralytics(results)

box_annotator = sv.BoxAnnotator()

category_dict = {

0: 'person', 1: 'bicycle', 2: 'car', 3: 'motorcycle', 4: 'airplane', 5: 'bus',

6: 'train', 7: 'truck', 8: 'boat', 9: 'traffic light', 10: 'fire hydrant',

11: 'stop sign', 12: 'parking meter', 13: 'bench', 14: 'bird', 15: 'cat',

16: 'dog', 17: 'horse', 18: 'sheep', 19: 'cow', 20: 'elephant', 21: 'bear',

22: 'zebra', 23: 'giraffe', 24: 'backpack', 25: 'umbrella', 26: 'handbag',

27: 'tie', 28: 'suitcase', 29: 'frisbee', 30: 'skis', 31: 'snowboard',

32: 'sports ball', 33: 'kite', 34: 'baseball bat', 35: 'baseball glove',

36: 'skateboard', 37: 'surfboard', 38: 'tennis racket', 39: 'bottle',

40: 'wine glass', 41: 'cup', 42: 'fork', 43: 'knife', 44: 'spoon', 45: 'bowl',

46: 'banana', 47: 'apple', 48: 'sandwich', 49: 'orange', 50: 'broccoli',

51: 'carrot', 52: 'hot dog', 53: 'pizza', 54: 'donut', 55: 'cake',

56: 'chair', 57: 'couch', 58: 'potted plant', 59: 'bed', 60: 'dining table',

61: 'toilet', 62: 'tv', 63: 'laptop', 64: 'mouse', 65: 'remote', 66: 'keyboard',

67: 'cell phone', 68: 'microwave', 69: 'oven', 70: 'toaster', 71: 'sink',

72: 'refrigerator', 73: 'book', 74: 'clock', 75: 'vase', 76: 'scissors',

77: 'teddy bear', 78: 'hair drier', 79: 'toothbrush'

}

labels = [

f"{category_dict[class_id]} {confidence:.2f}"

for class_id, confidence in zip(detections.class_id, detections.confidence)

]

annotated_image = box_annotator.annotate(

image.copy(), detections=detections, labels=labels

)

cv2.imwrite('annotated_demo.jpeg', annotated_image)

Image(filename='annotated_demo.jpeg', height=600)
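The hard-coded `category_dict` above lists the 80 COCO class names. As an alternative sketch, the labels can be built from any id-to-name mapping, so the dictionary does not have to be copied by hand. The helper `build_labels` is hypothetical, and using `results.names` as the mapping assumes the ultralytics result object exposes the class names under that attribute.

```python
def build_labels(names, class_ids, confidences):
    """Format one 'name score' label per detection from an id-to-name mapping."""
    return [
        f"{names[int(class_id)]} {confidence:.2f}"
        for class_id, confidence in zip(class_ids, confidences)
    ]


# Stand-in values so the snippet runs on its own. In the notebook the call
# would look like this (assumption: `results.names` is a dict of class names):
#   labels = build_labels(results.names, detections.class_id, detections.confidence)
names = {0: "person", 2: "car"}
print(build_labels(names, [0, 2], [0.912, 0.5]))  # ['person 0.91', 'car 0.50']
```

This also carries over to a fine-tuned model, whose class names differ from the COCO list.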

Model training

Dataset:

https://modelscope.cn/datasets/AI-ModelScope/tumor-dj2a1

Download the dataset

!mkdir /mnt/workspace/datasets

%cd /mnt/workspace/datasets

# Refer to: https://modelscope.cn/datasets/AI-ModelScope/tumor-dj2a1/summary

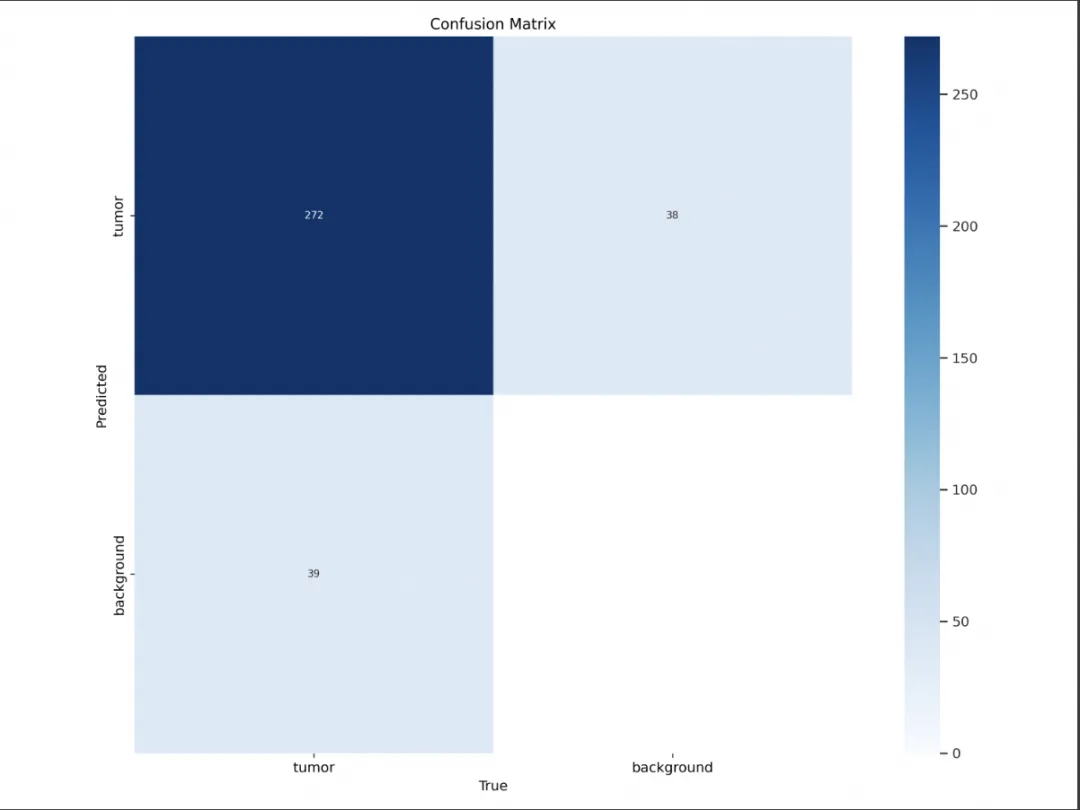

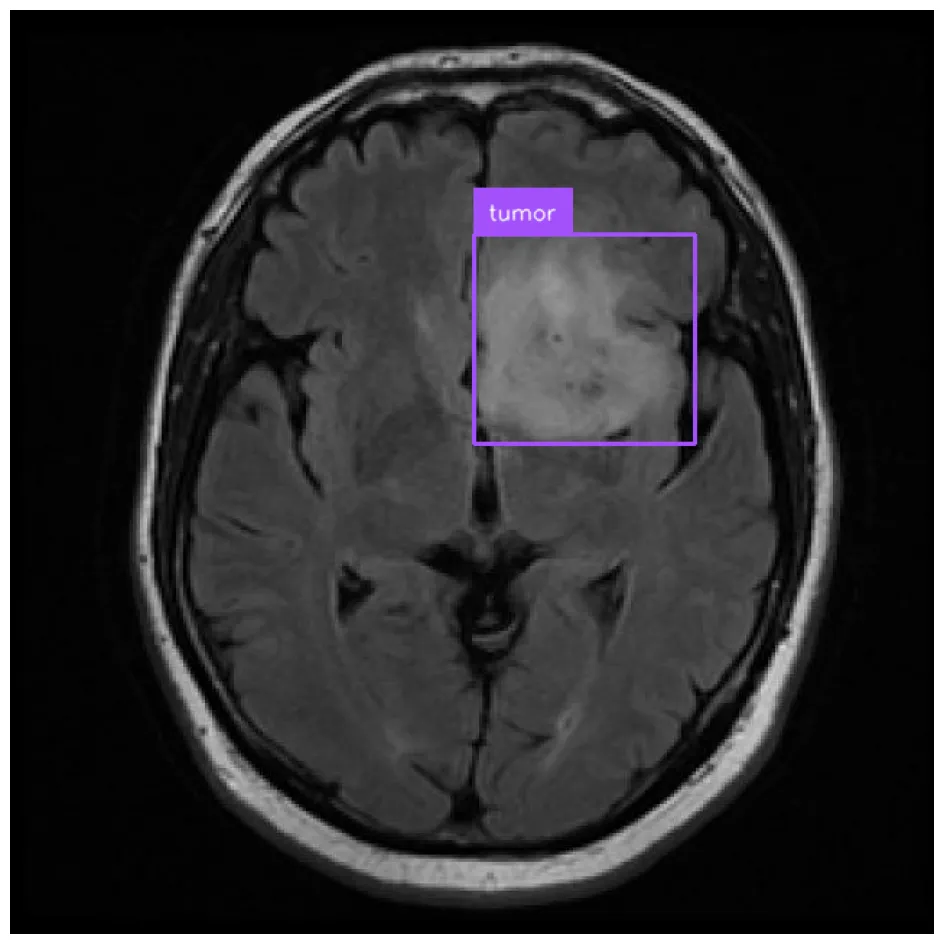

!git clone https://www.modelscope.cn/datasets/AI-ModelScope/tumor-dj2a1.git

Model customization

%cd /mnt/workspace/

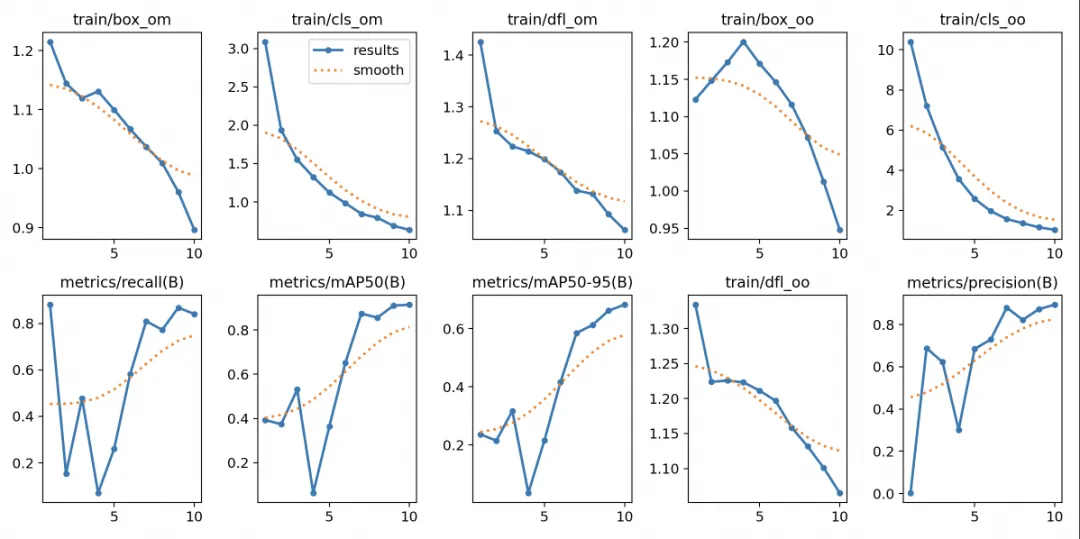

!yolo task=detect mode=train epochs=10 batch=32 plots=True \
model={MODEL_PATH}/yolov10n.pt \
data=/mnt/workspace/datasets/tumor-dj2a1/data.yaml

The confusion matrix for the detected classes is shown below:

The training losses and evaluation metrics are shown below:
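When the plots are not at hand, the same numbers can usually be read from the training log. The sketch below assumes the run directory contains a `results.csv` file with one row per epoch, as ultralytics-based trainers typically write. The path is inferred from the weights path used in this article, and the column names depend on the installed version, so treat both as assumptions.

```python
import csv
from pathlib import Path


def last_epoch_metrics(csv_path):
    """Return the final row of a training log CSV as {column: float}."""
    with Path(csv_path).open(newline="") as f:
        rows = list(csv.DictReader(f, skipinitialspace=True))
    if not rows:
        raise ValueError(f"no epochs logged in {csv_path}")
    # Header names may be padded with spaces, so strip them; skip empty cells.
    return {
        key.strip(): float(value)
        for key, value in rows[-1].items()
        if key is not None and value not in (None, "")
    }


log = Path("/mnt/workspace/runs/detect/train2/results.csv")  # assumed location
if log.exists():
    for name, value in last_epoch_metrics(log).items():
        print(f"{name}: {value:.4f}")
```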

Inference with the customized model:

from ultralytics import YOLOv10

model = YOLOv10('/mnt/workspace/runs/detect/train2/weights/best.pt')

dataset = sv.DetectionDataset.from_yolo(

images_directory_path="/mnt/workspace/datasets/tumor-dj2a1/valid/images",

annotations_directory_path="/mnt/workspace/datasets/tumor-dj2a1/valid/labels",

data_yaml_path="/mnt/workspace/datasets/tumor-dj2a1/data.yaml"

)

bounding_box_annotator = sv.BoundingBoxAnnotator()

label_annotator = sv.LabelAnnotator()

import random

random_image = random.choice(list(dataset.images.keys()))

random_image = dataset.images[random_image]

results = model(source=random_image, conf=0.25)[0]

detections = sv.Detections.from_ultralytics(results)

annotated_image = bounding_box_annotator.annotate(

scene=random_image, detections=detections)

annotated_image = label_annotator.annotate(

scene=annotated_image, detections=detections)

sv.plot_image(annotated_image)

Output:

0: 640x640 1 tumor, 6.4ms

Speed: 1.2ms preprocess, 6.4ms inference, 0.8ms postprocess per image at shape (1, 3, 640, 640)
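The weights path used above ends in `train2`, which depends on how many training runs have been started in the workspace. A small, hypothetical helper can locate the most recent `best.pt` instead of hard-coding the run name. The directory layout `train*/weights/best.pt` is an assumption based on the path shown in this article.

```python
from pathlib import Path


def latest_best_weights(runs_dir):
    """Return the most recently modified train*/weights/best.pt, or None."""
    candidates = list(Path(runs_dir).glob("train*/weights/best.pt"))
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.stat().st_mtime)


weights = latest_best_weights("/mnt/workspace/runs/detect")
print(weights)  # None when no finished training run is found
```

The returned path can then be passed to `YOLOv10(...)` in place of the fixed string.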

The fine-tuning example in this article is based on: https://colab.research.google.com/github/roboflow-ai/notebooks/blob/main/notebooks/train-yolov10-object-detection-on-custom-dataset.ipynb
