DeepSeek开源Janus-Pro多模态理解生成模型,魔搭社区推理、微调最佳实践

Janus-Pro是DeepSeek最新开源的多模态模型,是一种新颖的自回归框架,统一了多模态理解和生成。

01.引言

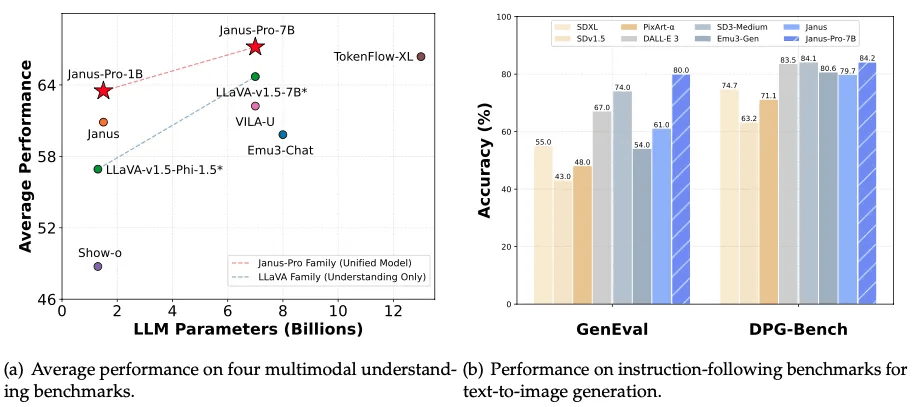

Janus-Pro是DeepSeek最新开源的多模态模型,是一种新颖的自回归框架,统一了多模态理解和生成。通过将视觉编码解耦为独立的路径,同时仍然使用单一的、统一的变压器架构进行处理,该框架解决了先前方法的局限性。这种解耦不仅缓解了视觉编码器在理解和生成中的角色冲突,还增强了框架的灵活性。Janus-Pro 超过了以前的统一模型,并且匹配或超过了特定任务模型的性能。Janus-Pro 的简洁性、高灵活性和有效性使其成为下一代统一多模态模型的强大候选者。

代码链接:

https://github.com/deepseek-ai/Janus

模型链接:

https://modelscope.cn/collections/Janus-Pro-0f5e48f6b96047

体验页面:

https://modelscope.cn/studios/AI-ModelScope/Janus-Pro-7B

Janus-Pro 是一个统一的理解和生成 MLLM,它将视觉编码解耦以支持多模态理解和生成。Janus-Pro 基于 DeepSeek-LLM-1.5b-base/DeepSeek-LLM-7b-base 构建。

对于多模态理解,它使用 SigLIP-L 作为视觉编码器,支持 384 x 384 图像输入。对于图像生成,Janus-Pro 使用来自LlamaGen的分词器,降采样率为 16。

02.模型效果

图片生成

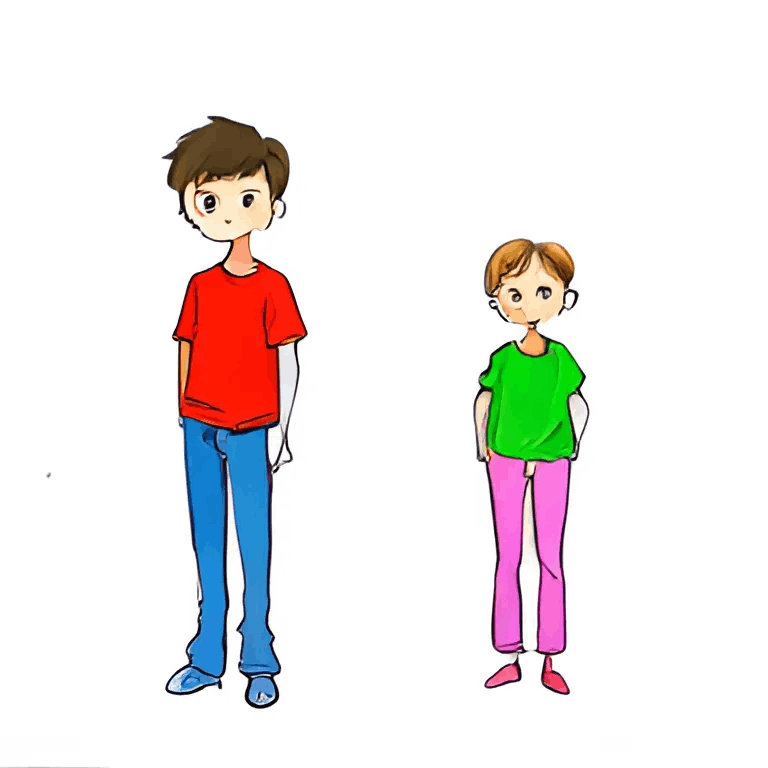

简单 Prompt

|

an apple |

a car |

a dog |

|

|

|

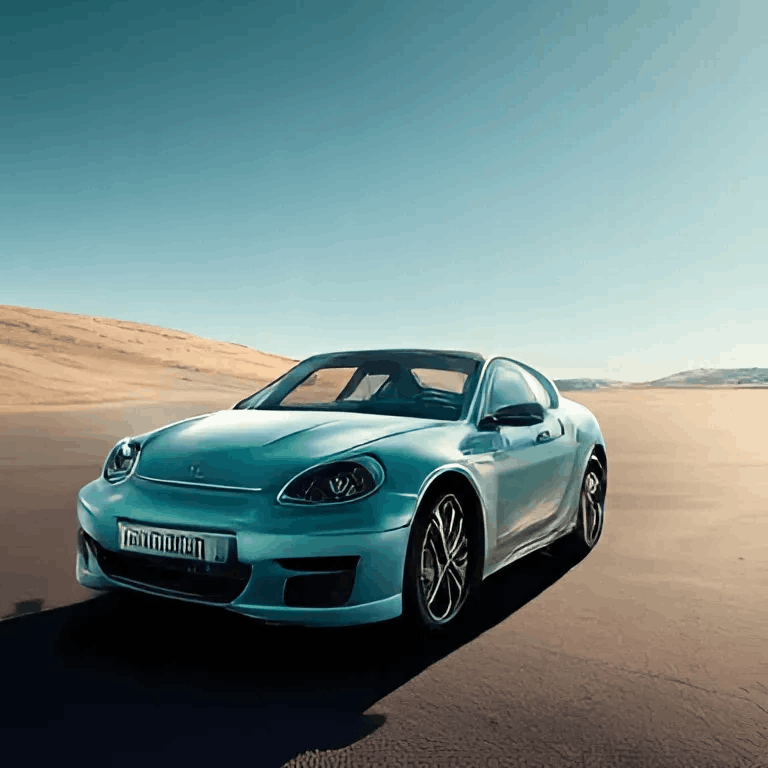

复杂 Prompt

|

a bear standing on a car, sunset, winter |

a boy and a girl, the boy stands at the left side, the boy wears a red t-shirt and blue pants, the girl wears a green t-shirt and pink pants. |

the apple is in the box, the box is on the chair, the chair is on the desk, the desk is in the room |

|

|

|

颜色可以做到分开控制

多风格

|

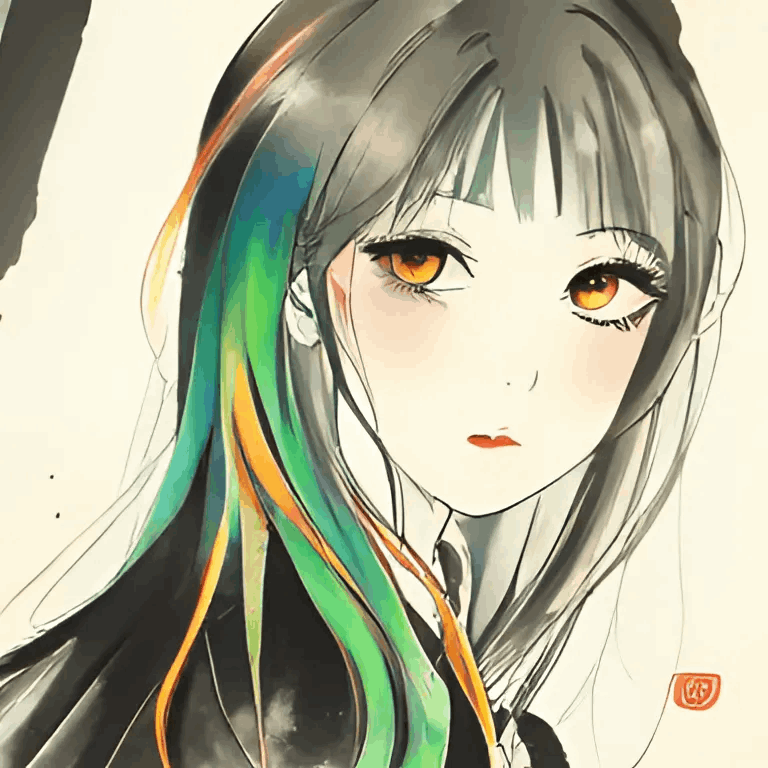

Chinese ink painting, a girl, long hair, colorful hair, shining eyes |

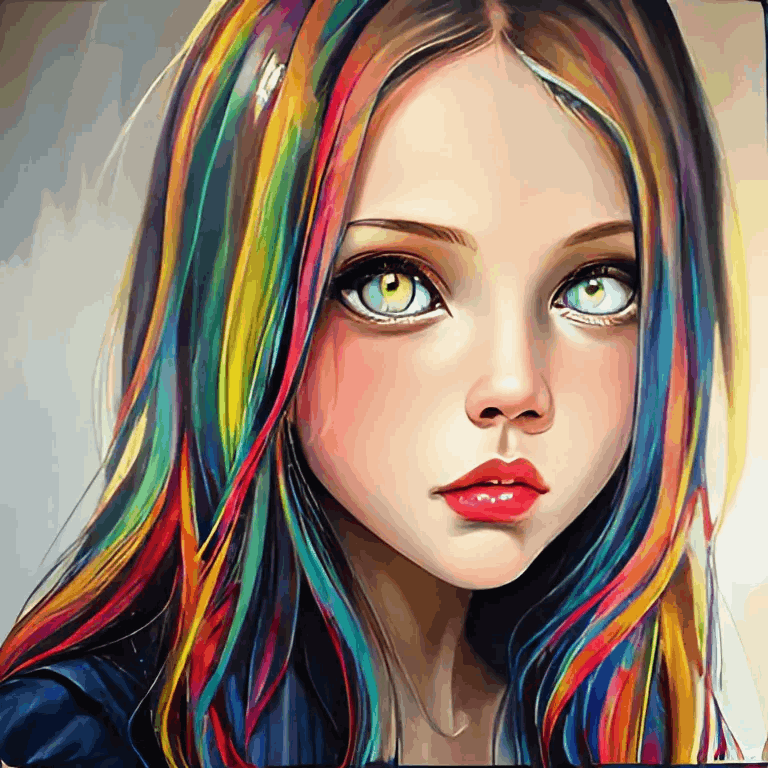

oil painting, a girl, long hair, colorful hair, shining eyes |

anime, a girl, long hair, colorful hair, shining eyes |

|

|

|

图片理解

识物

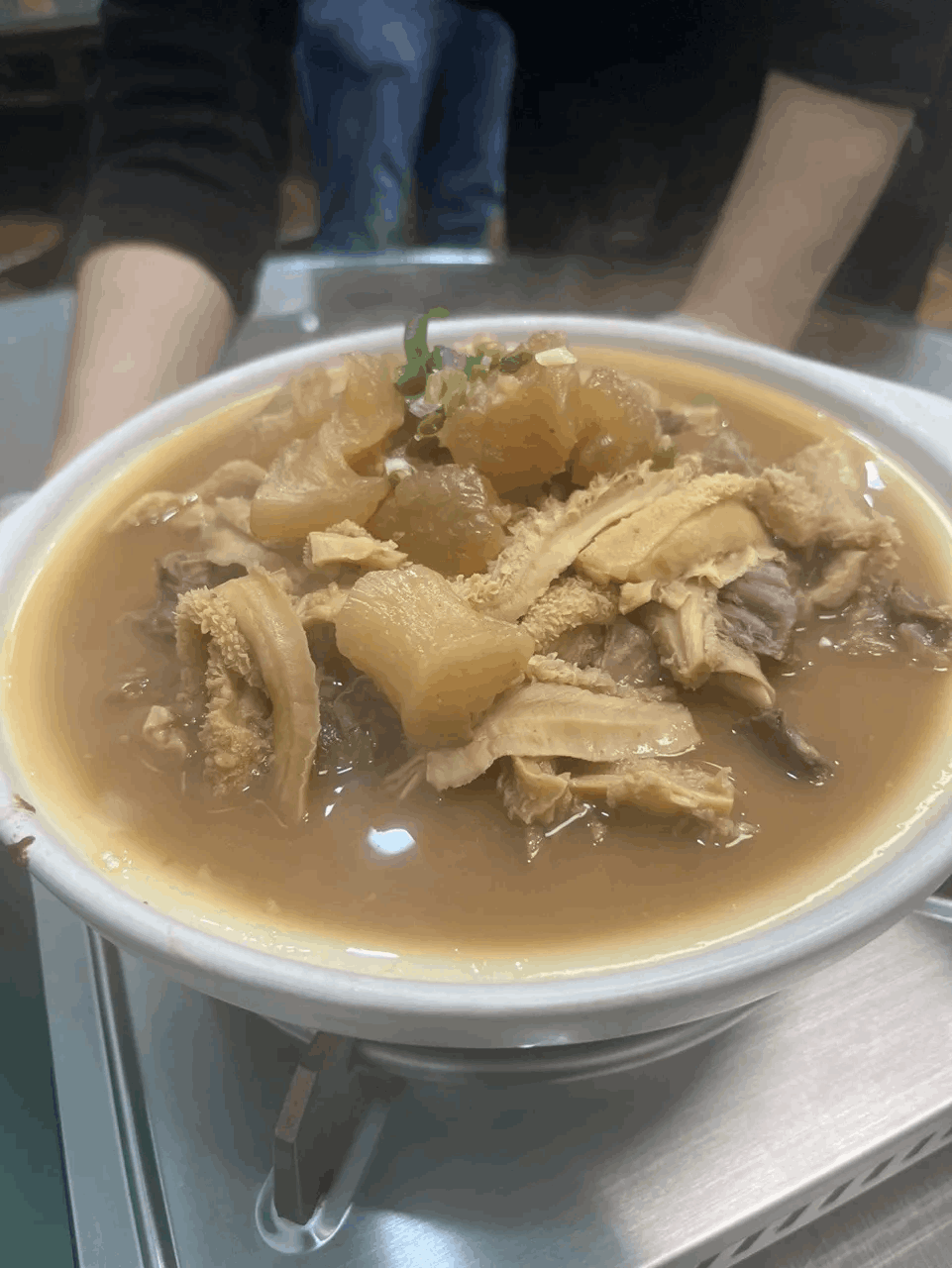

提问:

这道菜是什么?

回答:

这道菜看起来是一道中式炖菜,可能是“牛杂汤”或“牛杂火锅”。它通常由牛的各个部位的肉和内脏组成,如牛肚、牛肠、牛筋等。这些食材经过炖煮,味道浓郁,口感丰富。牛杂汤通常会加入一些香料和调味料,如八角、桂皮、花椒等,以增加风味。这道菜不仅味道鲜美,而且营养丰富,富含蛋白质和多种微量元素。

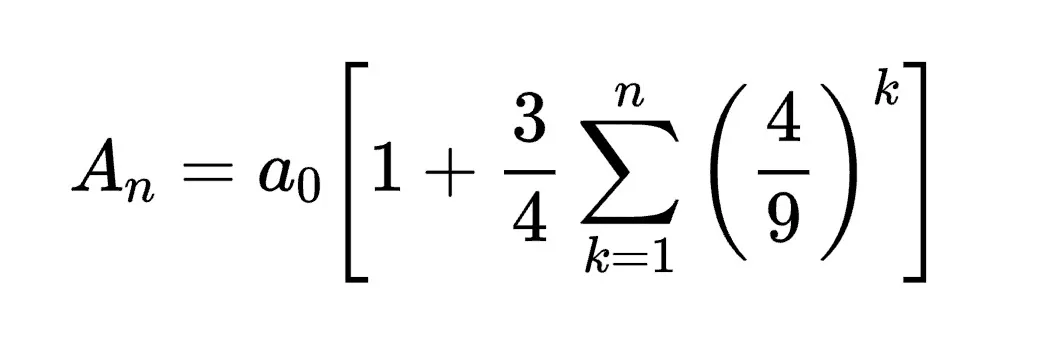

OCR&公式转换

提问:

Convert the formula into latex code.

回答:

Here is the formula converted into LaTeX code:

\[

A_n = a_0 \left[ 1 + \frac{3}{4} \sum_{k=1}^{n} \left( \frac{4}{9} \right)^k \right]

\]

03.模型推理

安装依赖

pip install git+https://github.com/deepseek-ai/Janus多模态理解:

import torch

from transformers import AutoModelForCausalLM

from janus.models import MultiModalityCausalLM, VLChatProcessor

from janus.utils.io import load_pil_images

from modelscope import snapshot_download

# specify the path to the model

model_path = snapshot_download("deepseek-ai/Janus-Pro-7B")

vl_chat_processor: VLChatProcessor = VLChatProcessor.from_pretrained(model_path)

tokenizer = vl_chat_processor.tokenizer

vl_gpt: MultiModalityCausalLM = AutoModelForCausalLM.from_pretrained(

model_path, trust_remote_code=True

)

vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()

question = "discribe the image"

image = "/mnt/workspace/Janus/images/doge.png"

conversation = [

{

"role": "<|User|>",

"content": f"<image_placeholder>\n{question}",

"images": [image],

},

{"role": "<|Assistant|>", "content": ""},

]

# load images and prepare for inputs

pil_images = load_pil_images(conversation)

prepare_inputs = vl_chat_processor(

conversations=conversation, images=pil_images, force_batchify=True

).to(vl_gpt.device)

# # run image encoder to get the image embeddings

inputs_embeds = vl_gpt.prepare_inputs_embeds(**prepare_inputs)

# # run the model to get the response

outputs = vl_gpt.language_model.generate(

inputs_embeds=inputs_embeds,

attention_mask=prepare_inputs.attention_mask,

pad_token_id=tokenizer.eos_token_id,

bos_token_id=tokenizer.bos_token_id,

eos_token_id=tokenizer.eos_token_id,

max_new_tokens=512,

do_sample=False,

use_cache=True,

)

answer = tokenizer.decode(outputs[0].cpu().tolist(), skip_special_tokens=True)

print(f"{prepare_inputs['sft_format'][0]}", answer)多模态生成:

import os

import PIL.Image

import torch

import numpy as np

from transformers import AutoModelForCausalLM

from janus.models import MultiModalityCausalLM, VLChatProcessor

from modelscope import snapshot_download

# specify the path to the model

model_path = snapshot_download("deepseek-ai/Janus-Pro-7B")

vl_chat_processor: VLChatProcessor = VLChatProcessor.from_pretrained(model_path)

tokenizer = vl_chat_processor.tokenizer

vl_gpt: MultiModalityCausalLM = AutoModelForCausalLM.from_pretrained(

model_path, trust_remote_code=True

)

vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()

conversation = [

{

"role": "<|User|>",

"content": "A stunning princess from kabul in red, white traditional clothing, blue eyes, brown hair",

},

{"role": "<|Assistant|>", "content": ""},

]

sft_format = vl_chat_processor.apply_sft_template_for_multi_turn_prompts(

conversations=conversation,

sft_format=vl_chat_processor.sft_format,

system_prompt="",

)

prompt = sft_format + vl_chat_processor.image_start_tag

@torch.inference_mode()

def generate(

mmgpt: MultiModalityCausalLM,

vl_chat_processor: VLChatProcessor,

prompt: str,

temperature: float = 1,

parallel_size: int = 16,

cfg_weight: float = 5,

image_token_num_per_image: int = 576,

img_size: int = 384,

patch_size: int = 16,

):

input_ids = vl_chat_processor.tokenizer.encode(prompt)

input_ids = torch.LongTensor(input_ids)

tokens = torch.zeros((parallel_size*2, len(input_ids)), dtype=torch.int).cuda()

for i in range(parallel_size*2):

tokens[i, :] = input_ids

if i % 2 != 0:

tokens[i, 1:-1] = vl_chat_processor.pad_id

inputs_embeds = mmgpt.language_model.get_input_embeddings()(tokens)

generated_tokens = torch.zeros((parallel_size, image_token_num_per_image), dtype=torch.int).cuda()

for i in range(image_token_num_per_image):

outputs = mmgpt.language_model.model(inputs_embeds=inputs_embeds, use_cache=True, past_key_values=outputs.past_key_values if i != 0 else None)

hidden_states = outputs.last_hidden_state

logits = mmgpt.gen_head(hidden_states[:, -1, :])

logit_cond = logits[0::2, :]

logit_uncond = logits[1::2, :]

logits = logit_uncond + cfg_weight * (logit_cond-logit_uncond)

probs = torch.softmax(logits / temperature, dim=-1)

next_token = torch.multinomial(probs, num_samples=1)

generated_tokens[:, i] = next_token.squeeze(dim=-1)

next_token = torch.cat([next_token.unsqueeze(dim=1), next_token.unsqueeze(dim=1)], dim=1).view(-1)

img_embeds = mmgpt.prepare_gen_img_embeds(next_token)

inputs_embeds = img_embeds.unsqueeze(dim=1)

dec = mmgpt.gen_vision_model.decode_code(generated_tokens.to(dtype=torch.int), shape=[parallel_size, 8, img_size//patch_size, img_size//patch_size])

dec = dec.to(torch.float32).cpu().numpy().transpose(0, 2, 3, 1)

dec = np.clip((dec + 1) / 2 * 255, 0, 255)

visual_img = np.zeros((parallel_size, img_size, img_size, 3), dtype=np.uint8)

visual_img[:, :, :] = dec

os.makedirs('generated_samples', exist_ok=True)

for i in range(parallel_size):

save_path = os.path.join('generated_samples', "img_{}.jpg".format(i))

PIL.Image.fromarray(visual_img[i]).save(save_path)

generate(

vl_gpt,

vl_chat_processor,

prompt,

)04.模型微调

我们介绍使用ms-swift对deepseek-ai/Janus-Pro-7B进行微调(注意:目前只支持图像理解的训练而不支持图像生成)。这里,我们将展示可运行的微调demo,并给出自定义数据集的格式。

在开始微调之前,请确保您的环境已准备妥当。

# pip install git+https://github.com/modelscope/ms-swift.git

git clone https://github.com/modelscope/ms-swift.git

cd ms-swift

pip install -e .微调脚本如下:

CUDA_VISIBLE_DEVICES=0 \

swift sft \

--model deepseek-ai/Janus-Pro-7B \

--dataset AI-ModelScope/LaTeX_OCR:human_handwrite#20000 \

--train_type lora \

--torch_dtype bfloat16 \

--num_train_epochs 1 \

--per_device_train_batch_size 1 \

--per_device_eval_batch_size 1 \

--learning_rate 1e-4 \

--lora_rank 8 \

--lora_alpha 32 \

--target_modules all-linear \

--freeze_vit true \

--gradient_accumulation_steps 16 \

--eval_steps 100 \

--save_steps 100 \

--save_total_limit 2 \

--logging_steps 5 \

--max_length 2048 \

--output_dir output \

--warmup_ratio 0.05 \

--dataloader_num_workers 4 \

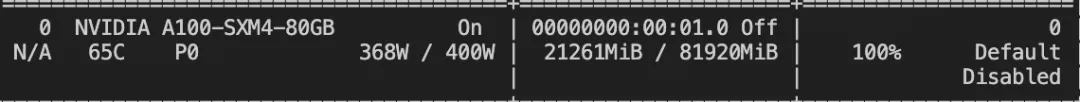

--dataset_num_proc 4训练显存占用:

如果要使用自定义数据集进行训练,你可以参考以下格式,并指定`--dataset <dataset_path>`。

{"messages": [{"role": "user", "content": "浙江的省会在哪?"}, {"role": "assistant", "content": "浙江的省会在杭州。"}]}

{"messages": [{"role": "user", "content": "<image><image>两张图片有什么区别"}, {"role": "assistant", "content": "前一张是小猫,后一张是小狗"}], "images": ["/xxx/x.jpg", "/xxx/x.png"]}训练完成后,使用以下命令对训练后的权重进行推理:

提示:这里的`--adapters`需要替换成训练生成的last checkpoint文件夹。由于adapters文件夹中包含了训练的参数文件`args.json`,因此不需要额外指定`--model`,swift会自动读取这些参数。如果要关闭此行为,可以设置`--load_args false`。

训练完成后,使用以下命令对训练后的权重进行推理:

提示:这里的`--adapters`需要替换成训练生成的last checkpoint文件夹。由于adapters文件夹中包含了训练的参数文件`args.json`,因此不需要额外指定`--model`,swift会自动读取这些参数。如果要关闭此行为,可以设置`--load_args false`。

CUDA_VISIBLE_DEVICES=0 \

swift infer \

--adapters output/vx-xxx/checkpoint-xxx \

--stream false \

--max_batch_size 1 \

--load_data_args true \

--max_new_tokens 2048

推送模型到ModelScope:

CUDA_VISIBLE_DEVICES=0 \

swift export \

--adapters output/vx-xxx/checkpoint-xxx \

--push_to_hub true \

--hub_model_id '<your-model-id>' \

--hub_token '<your-sdk-token>'

点击链接阅读原文,直达体验~

更多推荐

已为社区贡献657条内容

已为社区贡献657条内容

所有评论(0)