Introduction

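GLM-4-9B is the open-source member of GLM-4, the latest generation of pre-trained models released by Zhipu AI. On benchmark datasets covering semantics, mathematics, reasoning, code, and knowledge, both GLM-4-9B and its human-preference-aligned version, GLM-4-9B-Chat, perform strongly. The GLM-4-9B models offer stronger inference performance, longer context handling, multilingual support, multimodality, and All Tools capabilities. The series comprises the base model GLM-4-9B (8K), the chat model GLM-4-9B-Chat (128K), the ultra-long-context model GLM-4-9B-Chat-1M (1M), and the multimodal model GLM-4V-9B-Chat (8K).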

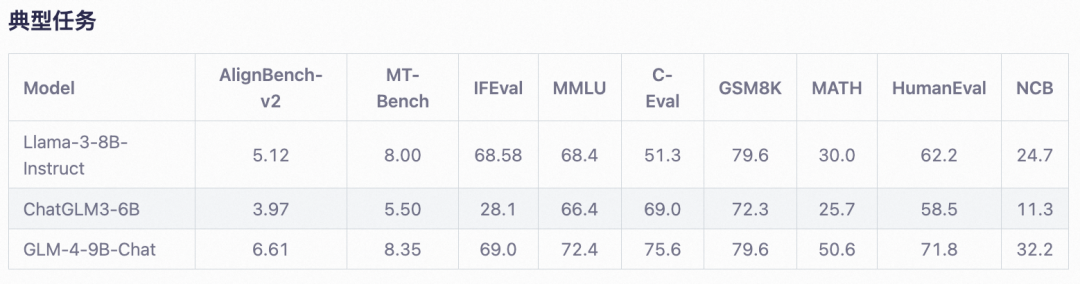

如下为GLM-4-9B-Chat模型的经典任务评测结果:

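Online demo

The ModelScope community converted the model format with DashInfer, its in-house open-source inference acceleration framework, so that the model can run on CPU, and set up a demo that you are welcome to try: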

https://www.modelscope.cn/studios/dash-infer/GLM-4-Chat-DashInfer-Demo/summary?from=csdnzishequ_text

A ModelScope Studio demo that uses vLLM for inference is also available:

https://www.modelscope.cn/studios/ZhipuAI/glm-4-9b-chat-vllm/summary?from=csdnzishequ_text

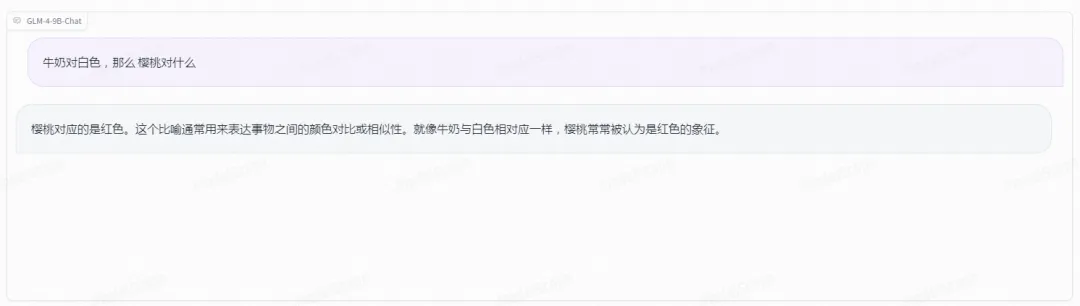

效果体验

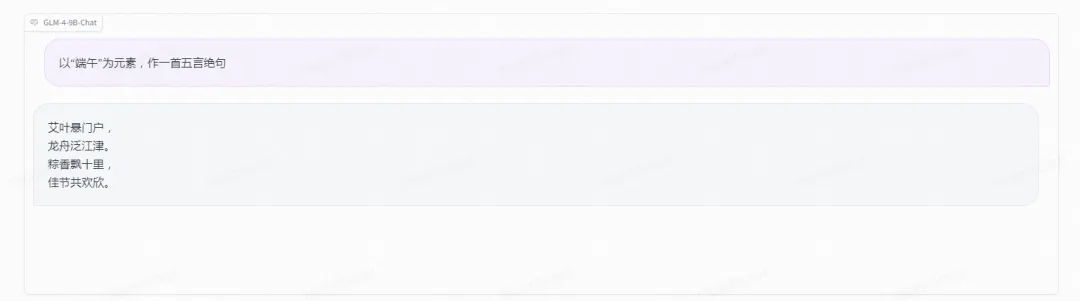

语义创作:

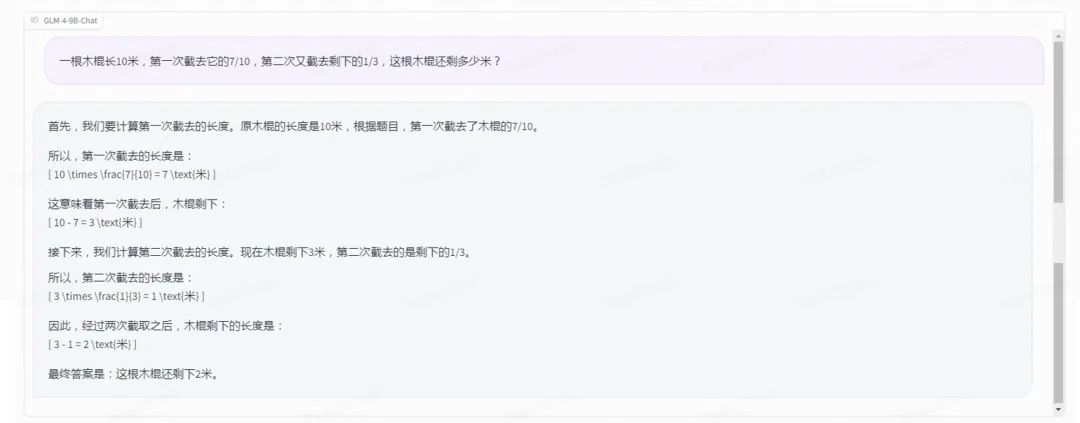

数学:

<计算题>

<应用题>

推理:

Model links and download

GLM-4-9B-Chat

Model link:

https://modelscope.cn/models/ZhipuAI/glm-4-9b-chat/summary

GLM-4-9B-Chat-1M

Model link:

https://modelscope.cn/models/ZhipuAI/glm-4-9b-chat-1m/summary

GLM-4-9B

Model link:

https://modelscope.cn/models/ZhipuAI/glm-4-9b/summary

GLM-4V-9B

Model link:

https://modelscope.cn/models/ZhipuAI/glm-4v-9b/summary
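For scripting, the four checkpoints and the context windows quoted in the introduction can be kept in a small lookup table. The sketch below is only a convenience: `GLM4_MODELS`, `pick_chat_model`, and `model_url` are hypothetical names, and the token counts assume that 8K, 128K, and 1M mean 8 x 1024, 128 x 1024, and 1024 x 1024 tokens respectively.

```python
# Hypothetical lookup table: ModelScope model id -> context window in tokens.
# Token counts assume 8K = 8 * 1024, 128K = 128 * 1024, 1M = 1024 * 1024.
GLM4_MODELS = {
    "ZhipuAI/glm-4-9b": 8 * 1024,             # base model
    "ZhipuAI/glm-4-9b-chat": 128 * 1024,      # chat model
    "ZhipuAI/glm-4-9b-chat-1m": 1024 * 1024,  # long-context chat model
    "ZhipuAI/glm-4v-9b": 8 * 1024,            # multimodal model
}

CHAT_MODELS = ("ZhipuAI/glm-4-9b-chat", "ZhipuAI/glm-4-9b-chat-1m")


def pick_chat_model(num_tokens: int) -> str:
    """Return the smallest chat checkpoint whose context window fits num_tokens."""
    for model_id in sorted(CHAT_MODELS, key=GLM4_MODELS.get):
        if num_tokens <= GLM4_MODELS[model_id]:
            return model_id
    raise ValueError(f"{num_tokens} tokens exceed every listed context window")


def model_url(model_id: str) -> str:
    """Build the ModelScope page URL in the same form as the links above."""
    return f"https://modelscope.cn/models/{model_id}/summary"


print(pick_chat_model(50_000))
print(model_url("ZhipuAI/glm-4v-9b"))
```

Under these assumptions, the 128K entry equals the `max_model_len` value of 131072 used in the vLLM script later in this article.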

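Downloading the model weights

from modelscope import snapshot_download

# Download the model snapshot from ModelScope and return its local directory
model_dir = snapshot_download("ZhipuAI/glm-4-9b-chat")

Model inference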

Using Transformers

Inference code for the large language model

import torch

from modelscope import AutoModelForCausalLM, AutoTokenizer

device = "cuda"

tokenizer = AutoTokenizer.from_pretrained("ZhipuAI/glm-4-9b-chat",trust_remote_code=True)

query = "你好"

inputs = tokenizer.apply_chat_template([{"role": "user", "content": query}],

add_generation_prompt=True,

tokenize=True,

return_tensors="pt",

return_dict=True

)

inputs = inputs.to(device)

model = AutoModelForCausalLM.from_pretrained(

"ZhipuAI/glm-4-9b-chat",

torch_dtype=torch.bfloat16,

low_cpu_mem_usage=True,

trust_remote_code=True

).to(device).eval()

gen_kwargs = {"max_length": 2500, "do_sample": True, "top_k": 1}

with torch.no_grad():

outputs = model.generate(**inputs, **gen_kwargs)

outputs = outputs[:, inputs['input_ids'].shape[1]:]

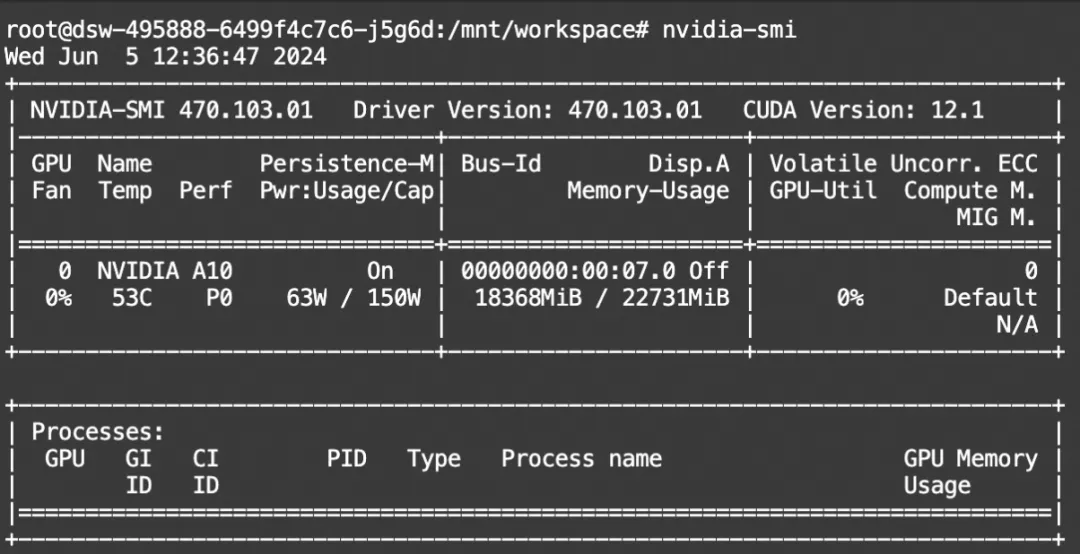

print(tokenizer.decode(outputs[0], skip_special_tokens=True))显存占用:
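Two details of the language-model script above are easy to miss. For a decoder-only model such as this one, `generate` returns the prompt tokens followed by the new tokens, which is why the output is sliced at the prompt length, and `max_length` caps prompt plus completion rather than the completion alone. The sketch below mimics both with plain Python lists; `fake_generate` is a hypothetical stand-in, not the Transformers API, and the token ids are made up.

```python
from typing import List


def fake_generate(input_ids: List[List[int]], max_length: int) -> List[List[int]]:
    """Hypothetical stand-in for model.generate: echoes each prompt and
    appends dummy token ids until the row holds max_length tokens in total."""
    rows = []
    for prompt in input_ids:
        budget = max(max_length - len(prompt), 0)  # tokens left for the reply
        rows.append(prompt + [900 + i for i in range(budget)])
    return rows


def strip_prompt(outputs: List[List[int]], prompt_len: int) -> List[List[int]]:
    """List equivalent of outputs[:, prompt_len:] on a 2-D tensor."""
    return [row[prompt_len:] for row in outputs]


prompt_ids = [[11, 12, 13, 14]]  # one prompt of 4 made-up token ids
outputs = fake_generate(prompt_ids, max_length=10)
new_tokens = strip_prompt(outputs, prompt_len=len(prompt_ids[0]))

print(len(outputs[0]))  # length of prompt + completion
print(new_tokens[0])    # completion only
```

With a long prompt, a fixed `max_length` therefore leaves fewer tokens for the reply, which is worth keeping in mind when reusing the value 2500 from the script.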

Inference code for the multimodal model

import torch
from PIL import Image
from modelscope import AutoModelForCausalLM, AutoTokenizer

device = "cuda"

tokenizer = AutoTokenizer.from_pretrained("ZhipuAI/glm-4v-9b", trust_remote_code=True)

query = '这张图片里面有几朵花?'  # "How many flowers are in this picture?"
image = Image.open("/mnt/workspace/玫瑰.jpeg").convert('RGB')

# Chat mode: the image travels in the same user message as the text query
inputs = tokenizer.apply_chat_template(
    [{"role": "user", "image": image, "content": query}],
    add_generation_prompt=True,
    tokenize=True,
    return_tensors="pt",
    return_dict=True,
)
inputs = inputs.to(device)

model = AutoModelForCausalLM.from_pretrained(
    "ZhipuAI/glm-4v-9b",
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    trust_remote_code=True,
).to(device).eval()

gen_kwargs = {"max_length": 500, "do_sample": True, "top_k": 1}
with torch.no_grad():
    outputs = model.generate(**inputs, **gen_kwargs)
    # Keep only the newly generated tokens (drop the prompt)
    outputs = outputs[:, inputs['input_ids'].shape[1]:]
    print(tokenizer.decode(outputs[0]))

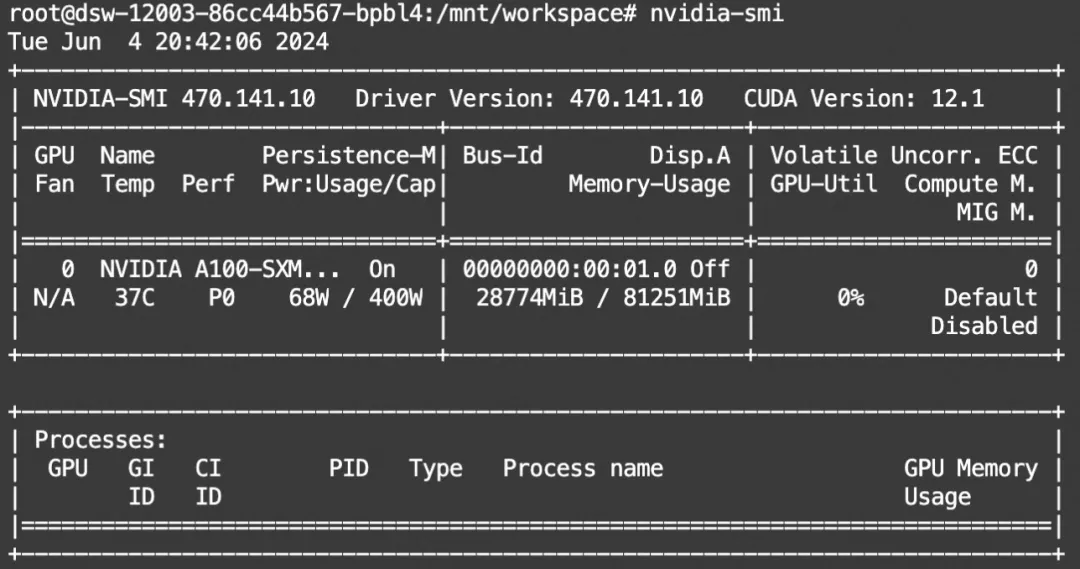

显存占用

Inference with vLLM

from modelscope import AutoTokenizer, snapshot_download
from vllm import LLM, SamplingParams

# GLM-4-9B-Chat
max_model_len, tp_size = 131072, 1
model_name = snapshot_download("ZhipuAI/glm-4-9b-chat")
prompt = '你好'  # "Hello"

tokenizer = AutoTokenizer.from_pretrained(model_name, trust_remote_code=True)
llm = LLM(
    model=model_name,
    tensor_parallel_size=tp_size,
    max_model_len=max_model_len,
    trust_remote_code=True,
    enforce_eager=True,
)

# Generation stops as soon as one of these token ids is produced
stop_token_ids = [151329, 151336, 151338]
sampling_params = SamplingParams(temperature=0.95, max_tokens=1024, stop_token_ids=stop_token_ids)

inputs = tokenizer.apply_chat_template([{'role': 'user', 'content': prompt}], add_generation_prompt=True)[0]
outputs = llm.generate(prompt_token_ids=[inputs], sampling_params=sampling_params)

generated_text = [output.outputs[0].text for output in outputs]
print(generated_text)

Inference with the DashInfer CPU engine

DashInfer-format model: https://www.modelscope.cn/models/dash-infer/glm-4-9b-chat-DI/summary

Python dependencies:

pip install modelscope dashinfer jinja2 tabulate torch transformers

Inference code:

import copy
import random

from modelscope import snapshot_download
from dashinfer.helper import EngineHelper, ConfigManager

model_path = snapshot_download("dash-infer/glm-4-9b-chat-DI")

config_file = model_path + "/" + "di_config.json"
config = ConfigManager.get_config_from_json(config_file)
config["model_path"] = model_path

## init EngineHelper class
engine_helper = EngineHelper(config)
engine_helper.verbose = True
engine_helper.init_tokenizer(model_path)

## init engine
engine_helper.init_engine()

## prepare inputs and generation configs
user_input = "浙江的省会在哪"  # "Where is the capital of Zhejiang?"
prompt = "[gMASK] <sop> " + "<|user|>\n" + user_input + "<|assistant|>\n"
gen_cfg = copy.deepcopy(engine_helper.default_gen_cfg)
gen_cfg["seed"] = random.randint(0, 10000)
request_list = engine_helper.create_request([prompt], [gen_cfg])

## inference
engine_helper.process_one_request(request_list[0])
engine_helper.print_inference_result_all(request_list)

engine_helper.uninit_engine()

Fine-tuning

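ms-swift already supports inference, fine-tuning, quantization, and OpenAI-compatible API deployment for the GLM-4 large language and multimodal models listed above. Here we demonstrate fine-tuning glm-4v-9b with swift and running inference on the fine-tuned model.

swift is the official ModelScope framework for fine-tuning and inference of large language models and multimodal large models.

swift repository: https://github.com/modelscope/swift

Best practices for glm-4v-9b inference and fine-tuning with swift: https://github.com/modelscope/swift/blob/main/docs/source/Multi-Modal/glm4v%E6%9C%80%E4%BD%B3%E5%AE%9E%E8%B7%B5.md

Multimodal models are usually fine-tuned on custom datasets. Here we show a demo that runs as-is, using the coco-mini-en-2 dataset, whose task is to describe the content of an image. The dataset is available on ModelScope: https://modelscope.cn/datasets/modelscope/coco_2014_caption/summary

Before fine-tuning, make sure your environment is set up: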

git clone https://github.com/modelscope/swift.git

cd swift

pip install -e .[llm]

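The LoRA fine-tuning script is shown below. It applies LoRA only to the qkv layers of the language and vision models; to fine-tune all linear layers instead, specify --lora_target_modules ALL.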

# Experimental environment: A100
# 30GB GPU memory
CUDA_VISIBLE_DEVICES=0 swift sft \
    --model_id_or_path ZhipuAI/glm-4v-9b \
    --dataset coco-mini-en-2
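To see why restricting LoRA to a few projection layers keeps training light, recall that LoRA freezes a weight matrix of shape d_out x d_in and trains two low-rank factors of shapes d_out x r and r x d_in instead. The sketch below counts the trainable parameters for one such layer. The layer dimensions and rank are hypothetical illustration values, not the actual glm-4v-9b configuration or the swift defaults.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters of a dense weight matrix (bias ignored)."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters of a LoRA adapter: B is d_out x rank, A is rank x d_in."""
    return rank * (d_in + d_out)


# Hypothetical layer size and rank, chosen only for illustration.
d_in, d_out, rank = 4096, 4096, 8

dense = full_params(d_in, d_out)
adapter = lora_params(d_in, d_out, rank)
print(f"dense: {dense:,}  lora: {adapter:,}  ratio: {adapter / dense:.4%}")
```

Targeting all linear layers multiplies the number of adapters, so the trainable parameter count and memory use grow accordingly.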

To use a custom dataset, specify it as follows:

# val_dataset is optional; if omitted, part of dataset is split off as the validation set

--dataset train.jsonl \

--val_dataset val.jsonl \

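Custom datasets can be in json or jsonl format. glm-4v-9b supports multi-turn conversations, but the conversation as a whole must include one image, given as a local path or a URL. Example records: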

{"query": "55555", "response": "66666", "images": ["image_path"]}

{"query": "eeeee", "response": "fffff", "history": [], "images": ["image_path"]}

{"query": "EEEEE", "response": "FFFFF", "history": [["AAAAA", "BBBBB"], ["CCCCC", "DDDDD"]], "images": ["image_path"]}
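A custom dataset in this format can be generated and sanity-checked with a few lines of Python. The sketch below is a standalone helper, not part of swift: `validate_record`, `write_jsonl`, and the file name `train.jsonl` are illustrative, and the checks encode only the rules stated above (string `query` and `response`, an optional `history` of [query, response] pairs, and an `images` list with one entry).

```python
import json
import os
import tempfile


def validate_record(record: dict) -> None:
    """Raise ValueError if a record breaks the format described above."""
    for key in ("query", "response"):
        if not isinstance(record.get(key), str):
            raise ValueError(f"'{key}' must be a string")
    for turn in record.get("history", []):
        if len(turn) != 2 or not all(isinstance(t, str) for t in turn):
            raise ValueError("each history turn must be a [query, response] pair")
    images = record.get("images")
    if not isinstance(images, list) or len(images) != 1:
        raise ValueError("'images' must be a list holding one path or URL")


def write_jsonl(records: list, path: str) -> None:
    """Validate every record, then write one JSON object per line."""
    for record in records:
        validate_record(record)
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


records = [
    {"query": "55555", "response": "66666", "images": ["image_path"]},
    {"query": "EEEEE", "response": "FFFFF",
     "history": [["AAAAA", "BBBBB"], ["CCCCC", "DDDDD"]],
     "images": ["image_path"]},
]

# Write to a temporary directory and read the file back as a round-trip check.
path = os.path.join(tempfile.mkdtemp(), "train.jsonl")
write_jsonl(records, path)
with open(path, encoding="utf-8") as f:
    loaded = [json.loads(line) for line in f]
print(len(loaded), "records written to", path)
```

The resulting file can then be passed to `--dataset` as shown earlier.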

The inference script for the fine-tuned model follows; change ckpt_dir to the checkpoint folder produced by training:

CUDA_VISIBLE_DEVICES=0 swift infer \
    --ckpt_dir output/glm4v-9b-chat/vx-xxx/checkpoint-xxx \
    --load_dataset_config true

You can also merge the LoRA weights and then run inference:

CUDA_VISIBLE_DEVICES=0 swift export \
    --ckpt_dir output/glm4v-9b-chat/vx-xxx/checkpoint-xxx \
    --merge_lora true

CUDA_VISIBLE_DEVICES=0 swift infer \
    --ckpt_dir output/glm4v-9b-chat/vx-xxx/checkpoint-xxx-merged \
    --load_dataset_config true
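Merging folds the adapter into the base weights so that inference needs no separate LoRA branch. Conceptually, with a frozen weight W and adapter factors B and A, the merged weight is W + s * BA for a scaling factor s, and it yields the same outputs as running the two branches separately. The toy sketch below checks that identity on tiny hand-written matrices. It is plain Python for illustration, not how swift implements `--merge_lora`, and all values are made up.

```python
def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def add(x, y, scale=1.0):
    """Elementwise x + scale * y."""
    return [[a + scale * b for a, b in zip(rx, ry)] for rx, ry in zip(x, y)]


# Toy values: W is 2x3 (d_out x d_in), B is 2x1, A is 1x3, so the rank is 1.
W = [[1.0, 0.0, 2.0], [0.0, 1.0, -1.0]]
B = [[0.5], [-1.0]]
A = [[2.0, 4.0, 6.0]]
scale = 0.5                # stands in for alpha / rank
x = [[1.0], [2.0], [3.0]]  # one input column vector

# Adapter path: base output plus the scaled low-rank branch.
separate = add(matmul(W, x), matmul(B, matmul(A, x)), scale)

# Merged path: fold the adapter into W once, then do a single matmul.
W_merged = add(W, matmul(B, A), scale)
merged = matmul(W_merged, x)

print(separate, merged)
```

Both paths give the same vector, which is why the merged checkpoint can be served like an ordinary model.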

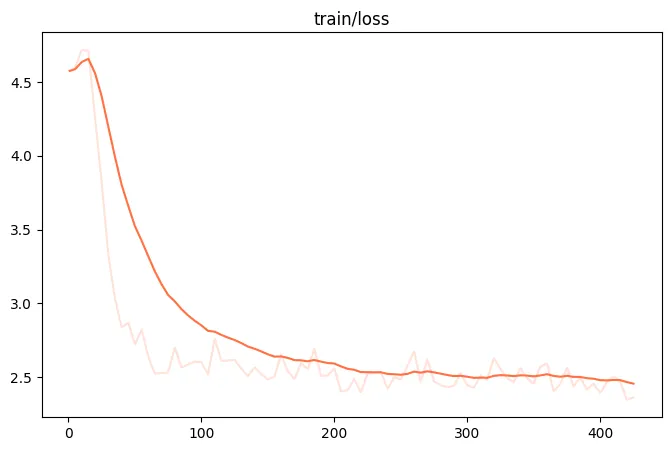

微调过程的loss可视化:(由于时间原因,这里只微调了400个steps)

微调后模型对验证集进行推理的示例:

[PROMPT][gMASK] <sop> <|user|>

<|begin_of_image|> <|endoftext|> <|end_of_image|> please describe the image. <|assistant|>[OUTPUT]A stuffed animal with a pink nose and black eyes is sitting on a bed. <|endoftext|>

[LABELS]A stuffed bear sitting on the pillow of a bed.

[IMAGES]['https://xingchen-data.oss-cn-zhangjiakou.aliyuncs.com/coco/2014/val2014/COCO_val2014_000000010395.jpg']

[PROMPT][gMASK] <sop> <|user|>

<|begin_of_image|> <|endoftext|> <|end_of_image|> please describe the image. <|assistant|>[OUTPUT]A giraffe is walking through a grassy field surrounded by trees. <|endoftext|>

[LABELS]A giraffe walks on the tundra tree-lined park.
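To compare outputs against labels in bulk, a log in the format above can be parsed into (output, label) pairs. The sketch below assumes the exact `[OUTPUT]`, `[LABELS]`, `[IMAGES]`, and `[PROMPT]` markers and the trailing `<|endoftext|>` shown in these two samples; `parse_log` is a hypothetical helper, and other swift versions may print a different layout.

```python
import re

# The two validation samples shown above, copied as raw log text.
LOG = """[PROMPT][gMASK] <sop> <|user|>
<|begin_of_image|> <|endoftext|> <|end_of_image|> please describe the image. <|assistant|>[OUTPUT]A stuffed animal with a pink nose and black eyes is sitting on a bed. <|endoftext|>
[LABELS]A stuffed bear sitting on the pillow of a bed.
[IMAGES]['https://xingchen-data.oss-cn-zhangjiakou.aliyuncs.com/coco/2014/val2014/COCO_val2014_000000010395.jpg']
[PROMPT][gMASK] <sop> <|user|>
<|begin_of_image|> <|endoftext|> <|end_of_image|> please describe the image. <|assistant|>[OUTPUT]A giraffe is walking through a grassy field surrounded by trees. <|endoftext|>
[LABELS]A giraffe walks on the tundra tree-lined park.
"""

# Capture the text after [OUTPUT] up to <|endoftext|>, then the text after
# [LABELS] up to the next marker or the end of the log.
RECORD = re.compile(
    r"\[OUTPUT\](.*?)\s*<\|endoftext\|>\s*\[LABELS\](.*?)\s*(?=\[IMAGES\]|\[PROMPT\]|\Z)",
    re.S,
)


def parse_log(text: str) -> list:
    """Return a list of (model_output, reference_label) pairs."""
    return [(out.strip(), label.strip()) for out, label in RECORD.findall(text)]


for output, label in parse_log(LOG):
    print("output:", output)
    print("label: ", label)
```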
